Your data science team spent three months building a demand forecasting model. The proof-of-concept looked sharp. The demo landed well with leadership. Then they tried running it against production data from your JD Edwards instance, and it fell apart fast. Date fields formatted differently across two plants. Product codes that mean different things in warehouse operations versus the finance team. Two years of transaction history locked in a legacy ERP nobody exports from anymore.

The model wasn’t wrong. The data was.

Key Insights: What You Need to Know About Data AI Readiness

- Most enterprises aren’t ready: Only 7% of enterprises report their data is completely ready for AI (Cloudera/HBR, 2026), meaning the overwhelming majority are building AI initiatives on a broken foundation.

- Project abandonment is rampant: S&P Global Market Intelligence reported in 2025 that 42% of companies were abandoning the majority of their AI initiatives before reaching production, up from 17% the prior year.

- Data quality isn’t prioritized enough: 73% of respondents say their organization should prioritize AI data quality more than it currently does (Cloudera/HBR, 2026), while siloed data (56%) and lack of a clear data strategy (44%) rank as the top obstacles to AI readiness.

- Redesigning workflows first is a key differentiator: McKinsey’s 2025 State of AI found that high performers were nearly three times as likely to have fundamentally redesigned workflows, and that strong technology and data infrastructure were among the practices most associated with meaningful AI value.

- ERP data is the primary gap: Most enterprise data AI readiness failures trace back to disconnected JD Edwards, NetSuite, Vista, or OneStream environments with no unified data layer.

- A data lakehouse changes the equation: Modern lakehouse architecture on Microsoft Fabric or Databricks serves BI reporting and AI/ML workloads from one governed foundation, with no separate infrastructure build required.

- Production-ready doesn’t have to take years: With pre-built Application Intelligence and certified ERP connectors, an AI-ready data foundation can be live in 8-12 weeks.

This is the pattern behind most enterprise AI failures. Not a bad algorithm. Not an oversold vendor. A data foundation that was never designed to support machine learning requirements, and nobody discovered that until the most expensive possible moment, after months of data science work pointed at the wrong target.

Data AI readiness is the degree to which an organization’s data meets the quality, governance, architecture, and integration standards that AI and machine learning models need to deliver reliable results. An enterprise with strong data AI readiness has connected, consistently defined, and governed data across its core systems (ERP, CRM, financial planning, and operational platforms) stored in an architecture that supports both analytical reporting and AI training workloads simultaneously. Organizations without this foundation can run AI experiments. They can’t run AI in production.

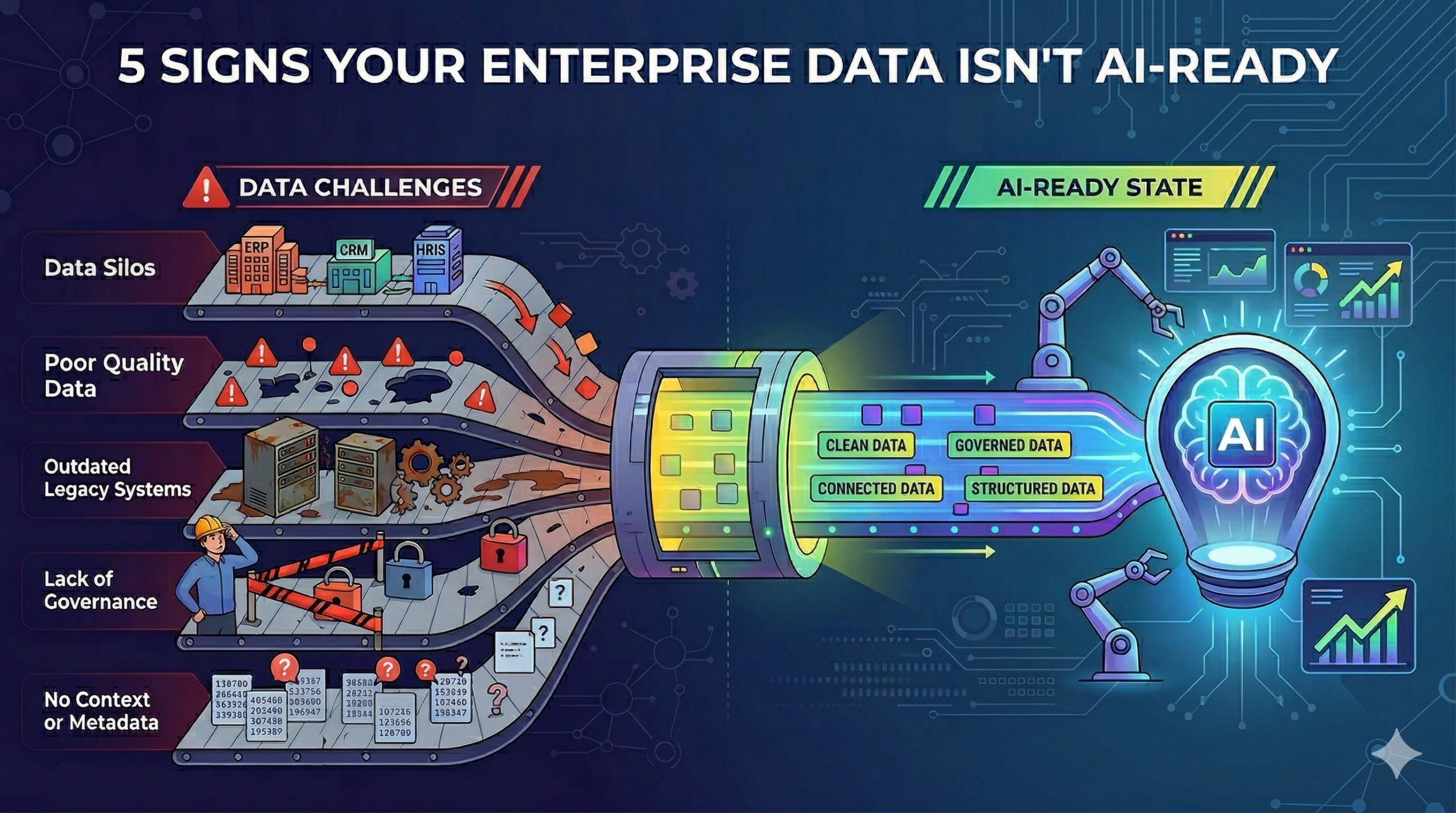

According to Cloudera and Harvard Business Review, only 7% of enterprises describe their data as completely ready for AI. The other 93% are on a spectrum from “addressable gaps” to “fundamental rework required.” Building AI That Works: The AI Readiness Playbook, co-authored by QuickLaunch Analytics and Fivetran, organizes data AI readiness into five measurable dimensions (data integration maturity, data quality and governance, infrastructure and architecture, organizational readiness, and use case clarity) that determine whether an enterprise data environment can support production AI. The five signs below map directly to where most organizations score lowest.

Your Critical Data Lives in Disconnected Systems

Fragmentation is the most reliable indicator of poor data AI readiness. Customer records in Salesforce. Financial data in JD Edwards or NetSuite. Project costs in a Vista ERP. Operational metrics in a separate data warehouse that nobody fully trusts. Each system accurate on its own terms. None of them talking to the others.

AI models discover patterns by analyzing relationships across data domains at the same time. A demand forecasting model needs purchasing history, inventory levels, seasonal trends, and supplier lead times together in one clean dataset, not in four systems requiring manual joins and daily reconciliation. A customer churn model needs support ticket history, product usage, billing patterns, and sales interactions combined. When that data lives in disconnected systems with no unified layer, there’s no practical way to build that view at the quality or scale machine learning data requirements demand.

In a 2024 survey of 300 C-suite executives by MIT Technology Review and Fivetran, 77% agreed that data integration and movement are a major challenge for their organizations. The AI Readiness Playbook reports that data integration and pipelines ranked as the number one challenge in preparing data for AI, cited by 45% of respondents, ahead of data governance (44%) and data quality (43%). Nearly three-quarters of enterprises manage or plan to manage more than 500 data sources. At that volume, manual integration is not just slow. It’s impractical.

The diagnostic question: how long does it take to combine data from your ERP and your CRM into a single coherent analysis? If the answer involves spreadsheets, email requests, or more than a few hours of data prep work, fragmentation is the problem. And cleaning the individual systems won’t fix it. The solution is building the integration layer: automated pipelines that connect source systems to a centralized data lakehouse where AI and BI tools can both read from a single governed source.

This is also where data silos and AI outcomes are most directly connected. Data silos don’t just slow analytics. They make the cross-functional training datasets that power enterprise AI literally impossible to build cleanly at scale.

Your Team Spends More Time Cleaning Data Than Using It

There’s a signal hiding in your analysts’ weekly schedule. Ask them to estimate what percentage of their time goes to data preparation (finding records, fixing formats, deduplicating entries, reconciling field definitions) versus actual analysis and decision support. For most enterprise analytics teams working with raw ERP data, that number runs between 40% and 70%.

The AI Readiness Playbook puts a sharper number on this problem: in the Fivetran/Redpoint AI & Data Readiness survey, 67% of enterprises that had centralized more than half their data still spent over 80% of their data engineering resources maintaining pipelines. That’s not a training issue or a staffing problem. It’s a trap. Your team can’t build AI capabilities because they’re too busy keeping the lights on.

That ratio is a direct measurement of data AI readiness. An AI model can’t clean its own training data. It can’t recognize that “ship-to date” means something different in a JDE F4201 table than in the format your finance team uses for revenue recognition. It trains on whatever it receives, and if what it receives is inconsistent, the model will produce outputs that are confidently, systematically wrong. High data preparation overhead isn’t just an analyst productivity problem. It means your data isn’t ready for machine learning at all.

The deeper issue is structural. High cleaning overhead almost always points to upstream failures: no quality rules enforced at ingestion, no automated validation pipelines, ERP fields that got repurposed over time without documentation (any shop running JD Edwards EnterpriseOne has likely seen F0101 used for purposes far outside its original design). Asking analysts to clean more carefully doesn’t address any of that. Real machine learning data requirements start with automated quality at the pipeline level, governed ingestion with validation rules, not heroic manual correction downstream.
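As a minimal sketch of what quality rules enforced at ingestion can look like, the Python below checks each incoming record against a few rules and quarantines failures instead of passing them downstream. The field names (`order_id`, `ship_date`, `quantity`) and the rules themselves are hypothetical illustrations, not taken from any specific ERP schema.

```python
from datetime import datetime

def _is_iso_date(value):
    """True when value is a string in YYYY-MM-DD form."""
    try:
        datetime.strptime(value, "%Y-%m-%d")
        return True
    except (TypeError, ValueError):
        return False

# Hypothetical rules: each field maps to a check that returns True when valid.
RULES = {
    "order_id": lambda v: isinstance(v, str) and v.strip() != "",
    "ship_date": _is_iso_date,
    "quantity": lambda v: isinstance(v, (int, float)) and v > 0,
}

def validate(record):
    """Return the list of field names that fail their rule."""
    return [field for field, check in RULES.items() if not check(record.get(field))]

def ingest(records):
    """Split records into accepted rows and quarantined (row, failures) pairs."""
    accepted, quarantined = [], []
    for record in records:
        failures = validate(record)
        if failures:
            quarantined.append((record, failures))
        else:
            accepted.append(record)
    return accepted, quarantined

incoming = [
    {"order_id": "SO-1001", "ship_date": "2025-03-14", "quantity": 12},
    {"order_id": "SO-1002", "ship_date": "14/03/2025", "quantity": 5},  # wrong date format
    {"order_id": "", "ship_date": "2025-03-15", "quantity": -1},        # blank id, bad quantity
]
accepted, quarantined = ingest(incoming)
```

The design point is that bad rows are stopped and labeled with the rule they broke at the moment they arrive, rather than discovered by an analyst weeks later.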

Forward-thinking teams are also adopting data contracts to formalize the agreements between source system owners and downstream consumers. A data contract specifies the schema, freshness, and quality guarantees a source system commits to delivering. When a JDE administrator adds a column or changes a field type, the contract flags the break before it silently corrupts a downstream model. Data contracts turn definition consistency from a manual coordination problem into an enforceable standard.
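To make the idea concrete, here is a small sketch of the schema portion of a data contract, written in Python. It covers only column names and types. Freshness and quality guarantees would need additional checks. The contract contents and the `check_contract` helper are hypothetical and do not represent any particular contract tool.

```python
# Hypothetical contract for an orders feed: expected column names and types.
CONTRACT = {
    "order_id": "string",
    "customer_id": "string",
    "order_date": "date",
    "amount": "decimal",
}

def check_contract(contract, observed_schema):
    """Compare an observed schema against the contract and list every break."""
    breaks = []
    for column, expected_type in contract.items():
        if column not in observed_schema:
            breaks.append(f"missing column: {column}")
        elif observed_schema[column] != expected_type:
            breaks.append(
                f"type changed: {column} expected {expected_type}, "
                f"got {observed_schema[column]}"
            )
    for column in observed_schema:
        if column not in contract:
            breaks.append(f"unexpected column: {column}")
    return breaks

# Simulated schema after an administrator changed a type and added a column.
observed = {
    "order_id": "string",
    "customer_id": "string",
    "order_date": "string",   # was "date"
    "amount": "decimal",
    "promo_code": "string",   # new, not in the contract
}
violations = check_contract(CONTRACT, observed)
```

Run as a gate before each load, a check like this would surface both changes as explicit violations instead of letting them flow silently into a training set.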

AI and ML Experiments Never Make It Out of the Lab

Most organizations can point to at least one AI proof-of-concept that performed well in demo conditions and then stalled before production deployment. Usually more than one. And the explanation tracks to the same root cause every time. The demo dataset was curated. The production data wasn’t.

Demo and POC datasets get hand-picked. They’re samples with consistent formats, normalized field names, and no gaps in historical records. Production enterprise data has three years of field name changes from a system upgrade, a plant acquisition that added new cost centers nobody fully mapped into the existing chart of accounts, and a fiscal year transition that broke continuity in historical purchasing data. An ML model trained on the clean sample runs into that production reality and produces results that contradict what operations teams know from direct experience, destroying confidence in the model before it has a chance to prove itself.

One specific failure pattern here is training-serving skew. During the POC, a data scientist computes features like rolling 90-day purchase averages or supplier reliability scores in a notebook using one set of logic. When the model moves to production, a different pipeline recomputes those same features with slightly different logic: a different join order, different null handling, or different date boundaries. The model receives inputs that look structurally identical but are computed differently from what it was trained on. Predictions degrade, and nobody can explain why because the schema looks correct. Feature stores and governed transformation layers exist specifically to prevent this class of silent failure.
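A toy illustration of training-serving skew, assuming the only difference between the two code paths is null handling. Both functions and the sample values are hypothetical. The point is that the same input yields two different feature values.

```python
# Daily purchase amounts; None marks a day with no recorded value.
purchases = [100.0, None, 80.0, None, 120.0]

def notebook_average(values):
    """POC logic: skip missing days and average only the observed values."""
    observed = [v for v in values if v is not None]
    return sum(observed) / len(observed)

def pipeline_average(values):
    """Production logic: treat missing days as zero purchases."""
    filled = [0.0 if v is None else v for v in values]
    return sum(filled) / len(filled)

training_feature = notebook_average(purchases)  # 100.0
serving_feature = pipeline_average(purchases)   # 60.0
skew = abs(training_feature - serving_feature)  # 40.0
```

Both outputs are a single float under the same feature name, so a schema check passes. A parity test that runs both implementations on the same sample and compares results is one simple way to catch the divergence. Computing the feature in exactly one shared function removes it.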

“The gap between AI ambition and AI execution is real, it’s expensive, and it’s growing.”

Building AI That Works: The AI Readiness Playbook

Research reinforces the scale of the problem. Gartner’s 2021 AI in Organizations Survey found that roughly half of AI projects (54%) successfully move from pilot to production, a number that hasn’t materially improved since. S&P Global’s 2025 data puts the picture in starker terms: 42% of companies are now abandoning the majority of their AI initiatives before deployment, up from 17% the prior year.

Better data scientists won’t solve this. Making the production data environment match what machine learning actually requires will. That means consistent schema, reliable and deep history, governed metric definitions applied uniformly across all source systems. Solving this once solves it for every future AI initiative. But it requires treating your data environment as infrastructure that needs to be built to support AI, not as a resource to be gathered on demand when each new model needs it.

You Can’t Trace Where a Business Number Comes From

Ask your team a specific question: if a Power BI dashboard shows gross margin at 34.2%, can anyone trace exactly which ERP tables fed that number, which transformation logic produced it, and which business rules defined “gross margin” in that calculation? If that question produces uncertainty, contradictory answers from different team members, or a multi-day research exercise, you have a gap in data governance for AI.
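One way to picture what recorded lineage provides is as a graph that can be walked from a reported number back to its raw sources. The sketch below assumes lineage is stored as a simple mapping from each dataset to its upstream inputs. Every node name is hypothetical.

```python
# Hypothetical lineage graph: each node maps to the upstream nodes it reads from.
LINEAGE = {
    "dashboard.gross_margin_pct": ["gold.margin_summary"],
    "gold.margin_summary": ["silver.sales_orders", "silver.item_costs"],
    "silver.sales_orders": ["bronze.erp_sales_orders"],
    "silver.item_costs": ["bronze.erp_item_costs"],
    "bronze.erp_sales_orders": [],
    "bronze.erp_item_costs": [],
}

def trace_sources(node, graph):
    """Return the raw source nodes (those with no upstream) that feed `node`."""
    sources, stack, seen = set(), [node], set()
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        upstream = graph.get(current, [])
        if not upstream:
            sources.add(current)
        stack.extend(upstream)
    return sorted(sources)

origins = trace_sources("dashboard.gross_margin_pct", LINEAGE)
# origins == ["bronze.erp_item_costs", "bronze.erp_sales_orders"]
```

With a graph like this in place, the tracing question takes seconds to answer. Without it, someone has to reconstruct the same path by reading SQL and asking colleagues.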

For AI specifically, data lineage matters in both directions. Training direction: when an AI model produces an inaccurate prediction, can you identify which data records contributed to that output and whether those records were correct? Debugging a misbehaving model without lineage is guesswork. Production direction: all models experience performance degradation over time as input data distributions shift. Without lineage, you can’t distinguish between “the model needs retraining” and “the data pipeline changed in a way that broke the model’s assumptions.” Both look identical from the output, but the fixes are completely different.

The industry term for the production-side discipline is model observability: monitoring model inputs, outputs, and performance continuously after deployment. Platforms like Databricks MLflow and Google Vertex AI build this into their ML serving infrastructure. Without it, you’re flying blind. A model can degrade for weeks before anyone notices, and by then the business decisions made on its outputs are already in motion.
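As a rough sketch of one input-monitoring check, the Python below computes the Population Stability Index (PSI), a common drift statistic chosen here for illustration. The article does not prescribe a specific metric. The bin edges, sample values, and `floor` parameter are assumptions. Thresholds somewhere around 0.1 to 0.25 are often used as rules of thumb for "investigate", but the right cutoff depends on the feature.

```python
import math

def bin_proportions(values, edges):
    """Fraction of values per bin; edges are ascending lower bounds of bins 1..n."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        index = sum(1 for edge in edges if v >= edge)
        counts[index] += 1
    return [c / len(values) for c in counts]

def psi(expected, actual, edges, floor=1e-6):
    """Population Stability Index between a baseline sample and a current sample."""
    score = 0.0
    for e_i, a_i in zip(bin_proportions(expected, edges), bin_proportions(actual, edges)):
        e_i, a_i = max(e_i, floor), max(a_i, floor)  # avoid log(0) on empty bins
        score += (a_i - e_i) * math.log(a_i / e_i)
    return score

edges = [10, 20, 30]
baseline = [5, 12, 15, 22, 25, 35, 8, 18, 28, 32]  # feature values at training time
current = [v + 10 for v in baseline]               # same feature after an upstream shift

no_drift = psi(baseline, baseline, edges)  # identical distributions give 0.0
drift_score = psi(baseline, current, edges)
```

A scheduled job could compute this per feature and alert when the score crosses a chosen threshold, which turns "the model degraded for weeks" into a same-day signal.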

Databricks reported in its 2026 State of AI Agents research that organizations using unified governance tooling were deploying far more AI projects to production than average, underscoring how tightly governance and productionization are linked. That’s the clearest signal in the research on what separates organizations getting AI agents into production from those still running pilots a year later.

Data governance for AI also prevents the slow decay that happens to well-built data foundations over time. New source systems get added without governance review. ERP fields get modified for operational reasons without analytics documentation. Business units develop their own metric calculations that diverge from the enterprise standard. Each change is individually minor. Collectively, they erode the clean, consistent data environment the AI models were trained on, until the model outputs no longer reflect business reality and nobody can explain why.

Your BI Reports and AI Outputs Tell Different Stories

Nothing damages organizational trust in AI faster than contradictory numbers. Your established Power BI report shows revenue up 11%. The AI-powered forecasting tool says demand is trending down. Nobody in the room can immediately explain why they differ.

This happens when AI tools connect to different data sources than the core BI platform. A vendor demo environment hooked directly to a staging database. A predictive analytics tool set up without access to complete ERP history. A machine learning pipeline that uses “customer” as defined in the CRM while the BI platform uses “customer” as defined in finance: two different structures, two different counts, two different pictures of the same business.

“If your BI dashboards pull from one set of tables and your AI models train on a different copy of the same data, you will get different answers. It’s not a matter of if. It’s a matter of when. And when it happens, it destroys trust in both systems.”

Building AI That Works: The AI Readiness Playbook

The technical cause is the absence of an AI-ready data foundation that all tools read from. A proper foundation isn’t separate data environments for different tools. It’s one governed, centralized data lakehouse architecture (bronze for raw source data, silver for cleaned and standardized records, gold for business-ready datasets) where every tool reads from the same source of truth with the same metric definitions applied consistently.
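The bronze, silver, and gold progression can be sketched in a few lines of plain Python. This is a toy model of the layering idea only. A real lakehouse would use tables on a platform such as Microsoft Fabric or Databricks, and the source names, item codes, and cleaning rules below are hypothetical.

```python
# Bronze: raw rows exactly as they arrive from two hypothetical source systems.
bronze = [
    {"source": "plant_a", "item": " ab-100 ", "qty": "10", "unit_price": "2.50"},
    {"source": "plant_b", "item": "AB-100", "qty": "4", "unit_price": "2.50"},
    {"source": "plant_b", "item": "cd-200", "qty": "n/a", "unit_price": "7.00"},
]

def to_silver(rows):
    """Silver: standardize item codes and types; skip rows that fail parsing."""
    cleaned = []
    for row in rows:
        try:
            cleaned.append({
                "source": row["source"],
                "item": row["item"].strip().upper(),
                "qty": int(row["qty"]),
                "unit_price": float(row["unit_price"]),
            })
        except ValueError:
            continue  # a real pipeline would quarantine and log this row
    return cleaned

def to_gold(rows):
    """Gold: business-ready revenue per item, using one shared definition."""
    revenue = {}
    for row in rows:
        revenue[row["item"]] = revenue.get(row["item"], 0.0) + row["qty"] * row["unit_price"]
    return revenue

silver = to_silver(bronze)
gold = to_gold(silver)  # {"AB-100": 35.0}
```

A dashboard would read `gold`, while a model needing row-level history could read `silver`. Both derive from the same `bronze` input, so their numbers reconcile.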

When BI Reports and AI Outputs Conflict: Symptoms, Causes, and Fixes

| Symptom | Root Cause | What Fixes It |
|---|---|---|
| BI report and AI model show different revenue numbers | Different data sources or metric definitions | Single governed semantic layer with one “revenue” definition |
| AI forecast contradicts operational dashboards | AI tool trained on incomplete or stale ERP history | Unified lakehouse with full historical depth across bronze/silver/gold layers |
| Leadership doesn’t trust AI outputs | No way to trace how the AI arrived at its answer | End-to-end data lineage from source system through model output |
| Each department gets different answers from the same AI tool | No enterprise-wide metric governance | Pre-built enterprise semantic model with standardized business logic |
| AI model accuracy degrades over time without explanation | No monitoring for data drift or schema changes in production | Automated drift detection with alerts when input distributions shift |

Conflicting outputs from different tools are also a prompt for a specific conversation: does your organization have a single, authoritative definition for each major business metric (revenue, margin, customer count, units shipped) that every data tool uses? If the answer is no, AI outputs will always conflict with BI reporting, and that conflict will systematically undermine AI adoption.
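A minimal sketch of what "one authoritative definition" means in practice: the metric is defined once, and both the reporting path and the modeling path call that definition instead of reimplementing it. The registry, function names, and sample figures are hypothetical.

```python
def gross_margin_pct(revenue, cost_of_goods_sold):
    """Gross margin as a percentage of revenue."""
    if revenue == 0:
        raise ValueError("revenue must be non-zero")
    return (revenue - cost_of_goods_sold) / revenue * 100

# Hypothetical registry: one authoritative function per business metric.
METRICS = {"gross_margin_pct": gross_margin_pct}

def bi_report(period):
    """BI path: formats the metric for a dashboard tile."""
    value = METRICS["gross_margin_pct"](period["revenue"], period["cogs"])
    return f"Gross margin: {value:.1f}%"

def model_features(period):
    """ML path: emits the same metric as a numeric feature."""
    value = METRICS["gross_margin_pct"](period["revenue"], period["cogs"])
    return {"gross_margin_pct": value}

period = {"revenue": 1000.0, "cogs": 658.0}
tile = bi_report(period)           # "Gross margin: 34.2%"
features = model_features(period)
```

Because neither consumer holds its own copy of the formula, a change to the definition reaches the dashboard and the model at the same time.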

When These Signs Might Not Mean What You Think

Not every data challenge is a data AI readiness problem. Organizations deploying pre-trained large language models for internal document search or knowledge retrieval (RAG-based chatbots) face different requirements than those building predictive models on ERP transaction history. A chatbot needs governed access and data freshness but doesn’t need five years of historical continuity. Similarly, rule-based automation projects (automated invoice matching, simple classification tasks) can operate with looser governance than demand forecasting or anomaly detection models.

The five signs above apply most directly to organizations building predictive, prescriptive, or agentic AI on enterprise operational data. If your first AI project is narrower than that, start with the signs most relevant to your use case. But if you’re planning to scale AI across multiple business functions over the next 12-18 months, all five will need to be addressed, and addressing them in sequence, starting with integration, is faster than trying to fix everything in parallel.

Your Data AI Readiness Action Plan

Recognizing these signs is step one. Fixing them requires treating your data foundation as infrastructure, not as a cleanup project that runs alongside everything else.

The organizations that make AI work follow a deliberate sequence. Connect data across all source systems through automated, governed pipelines. Centralize it in a data lakehouse architecture with progressive refinement layers. Build a semantic layer that standardizes business logic (revenue, margin, customer definitions) enterprise-wide so every tool reads the same version of truth. The AI Readiness Playbook identifies these as the three foundations every AI platform needs: automated data movement, governed lakehouse architecture, and a trusted enterprise semantic layer. Each one addresses specific dimensions of data AI readiness, and each one makes the next one easier to build.

The Playbook also offers a practical 90-day roadmap organized by maturity stage. For organizations at early maturity, the focus is getting 3-5 critical data sources flowing into a cloud lakehouse within the first 30 days. For mid-maturity teams with centralized data, the priority shifts to building out the semantic layer with AI-readiness metadata. For advanced organizations already running BI on a lakehouse, the 90-day plan centers on deploying evaluation frameworks and getting the first AI workload into production with full governance.

For most enterprises, a full custom build of this foundation takes 12-24 months. But organizations running JD Edwards, NetSuite, Vista, or OneStream don’t have to build from scratch. Pre-built Application Intelligence with certified ERP connectors has already solved the ERP-specific data mapping problems (the JDE field translations, the NetSuite multi-subsidiary consolidation logic, the Vista job cost structures) that consume most of the custom build timeline. The result: a production-ready AI-ready data foundation in 8-12 weeks.

Once their JD Edwards and manufacturing data was unified in a QuickLaunch-powered lakehouse, IGI Wax applied machine learning models that reduced manufacturing waste from 8.5% to under 3%, delivering $8-10 million in increased annual profit.

Check Your Data AI Readiness

For a deeper look at the three foundations every AI platform needs (automated data movement, governed lakehouse architecture, and a trusted enterprise semantic layer), plus a practical 90-day roadmap organized by maturity stage, download the Building AI That Works: The AI Readiness Playbook, co-authored by QuickLaunch Analytics and Fivetran.

Frequently Asked Questions

What does it mean for data to be AI-ready?

AI-ready data meets the specific quality, governance, architecture, and integration requirements that ML models need to produce reliable results in production. That means accurately captured, consistently defined across all source systems, accessible through automated pipelines, and stored in an architecture that supports both reporting and ML training from the same governed environment. For most enterprises, the gap comes down to three structural issues: fragmentation across disconnected systems, inconsistent metric definitions between departments, and no governance framework maintaining quality over time. A data lakehouse with a standardized enterprise semantic model addresses all three.

How do you assess enterprise data AI readiness?

Assess five dimensions: data integration (can you combine data from ERP, CRM, and financial systems automatically?), data quality (what proportion of analyst time goes to cleaning versus analysis?), metric governance (does every department use identical definitions for core metrics?), data lineage (can you trace any reported number back to its source?), and architecture readiness (does your current infrastructure support ML workloads alongside BI without a separate build?). The AI Readiness Playbook provides a scored assessment framework across all five dimensions that maps gaps to a prioritized remediation plan.

What are the most common data readiness gaps in enterprise ERP environments?

The most common gaps are field repurposing (ERP fields used for undocumented purposes that create hidden inconsistencies), inconsistent master data across business units, missing historical continuity from system migrations or acquisitions, and definition drift (metrics that changed meaning over time without retroactive adjustment). JD Edwards environments carry heavily customized field usage: tables like F0101 accumulate years of ad-hoc customization. NetSuite multi-subsidiary configurations create consolidation complexity. Vista job cost structures require construction-specific data modeling. Pre-built Application Intelligence packs address these gaps with domain-specific ERP knowledge built into the extraction layer.

Can an organization start AI projects without full data readiness?

Yes, with curated sample datasets to validate use cases before committing to infrastructure investment. But production deployment without an AI-ready foundation fails at documented rates: S&P Global reported in 2025 that 42% of companies were abandoning the majority of their AI initiatives before reaching production. Running targeted proof-of-concepts while building the data foundation in parallel is the most defensible approach. McKinsey’s 2025 State of AI found that high performers were nearly three times as likely to have fundamentally redesigned workflows, reinforcing the importance of getting infrastructure right before scaling.

How long does it take to fix data readiness issues?

Custom data warehouse and integration projects for enterprises running JD Edwards or NetSuite typically require 12-24 months to reach production-quality unified analytics. Organizations using pre-built Application Intelligence with certified ERP connectors compress that timeline to 8-12 weeks. The difference is that certified connectors have already solved the ERP-specific data mapping problems (the JDE field translations, the NetSuite consolidation logic, the Vista job cost structures) that consume the majority of custom build time. The 8-12 week path delivers a working AI-ready data foundation, not a limited proof-of-concept.

What is the difference between a data warehouse and an AI-ready data foundation?

A traditional data warehouse provides one refined layer of clean, business-ready data for BI reporting. An AI-ready data foundation adds the raw data access, historical granularity, and workload flexibility that ML training and inference require. The key architectural element is the data lakehouse: a medallion architecture with bronze, silver, and gold layers that progressively refine raw source data. BI tools query gold for reporting. ML models access bronze and silver for training data at the granularity they need. Both workloads run from a single governed platform on Microsoft Fabric or Databricks.

How do data silos affect AI and machine learning projects?

Data silos block the cross-functional datasets that give enterprise AI its practical value. A demand forecasting model needs purchasing data, inventory records, and sales history together. A customer health model needs support interactions, billing history, and product usage combined. Models built in siloed environments train on incomplete representations of business reality, producing outputs that contradict what experienced operations teams know. Breaking down data silos through an integrated data lakehouse is the single most impactful structural change an enterprise can make for data AI readiness.

What role does data governance play in AI readiness?

Data governance keeps an AI-ready data foundation functional over time. Without it, even well-built pipelines degrade: formats change, field definitions drift, metric calculations diverge across teams. For AI specifically, governance extends beyond standard quality monitoring to include data lineage tracking, model drift detection, and access controls that prevent sensitive data from entering training pipelines inappropriately. Enterprises running JD Edwards, NetSuite, or Vista need governance frameworks that account for ERP-specific data changes (fiscal year configurations, acquisition-driven entity additions, business unit retirements) so historical data stays interpretable for ML workloads over time.